I was raised in a small town in southwest China and completed all my schooling there before college. I’m grateful for the opportunities that followed; the path I’m on now was beyond anything I imagined growing up. 🙂

My research aims to develop efficient and scalable AI algorithms and systems. I am particularly excited about redesigning AI algorithms to be both theoretically grounded and practically efficient.

- 🤗 Scalable and Theory-grounded Optimization for Foundation Models: Pre-training & Post-training (RL)

- 🤗 Hardware-software Co-design for Efficient AI Deployment

- ♾️ Meta AI | May '25 – Dec '25 | Research Scientist Intern | Topic: Theory-driven Efficient Learning for RLVR | Advisors: Dr. Yuandong Tian, Dr. Zechun Liu, Dr. Kai Sheng Tai

- ♾️ Meta AI | May '24 – Oct '24 | Research Scientist Intern

- 💡 Lightelligence Inc. | May '23 – Sept '23 | Software Research Intern

- 🧠 Google Brain | Jul '22 – Nov '22 | Student Researcher

I have published papers in top machine learning, systems, computer architecture, and design automation venues, including MLSys, HPCA, NeurIPS, ICCV, COLM, DAC, ICCAD, and TCAD.

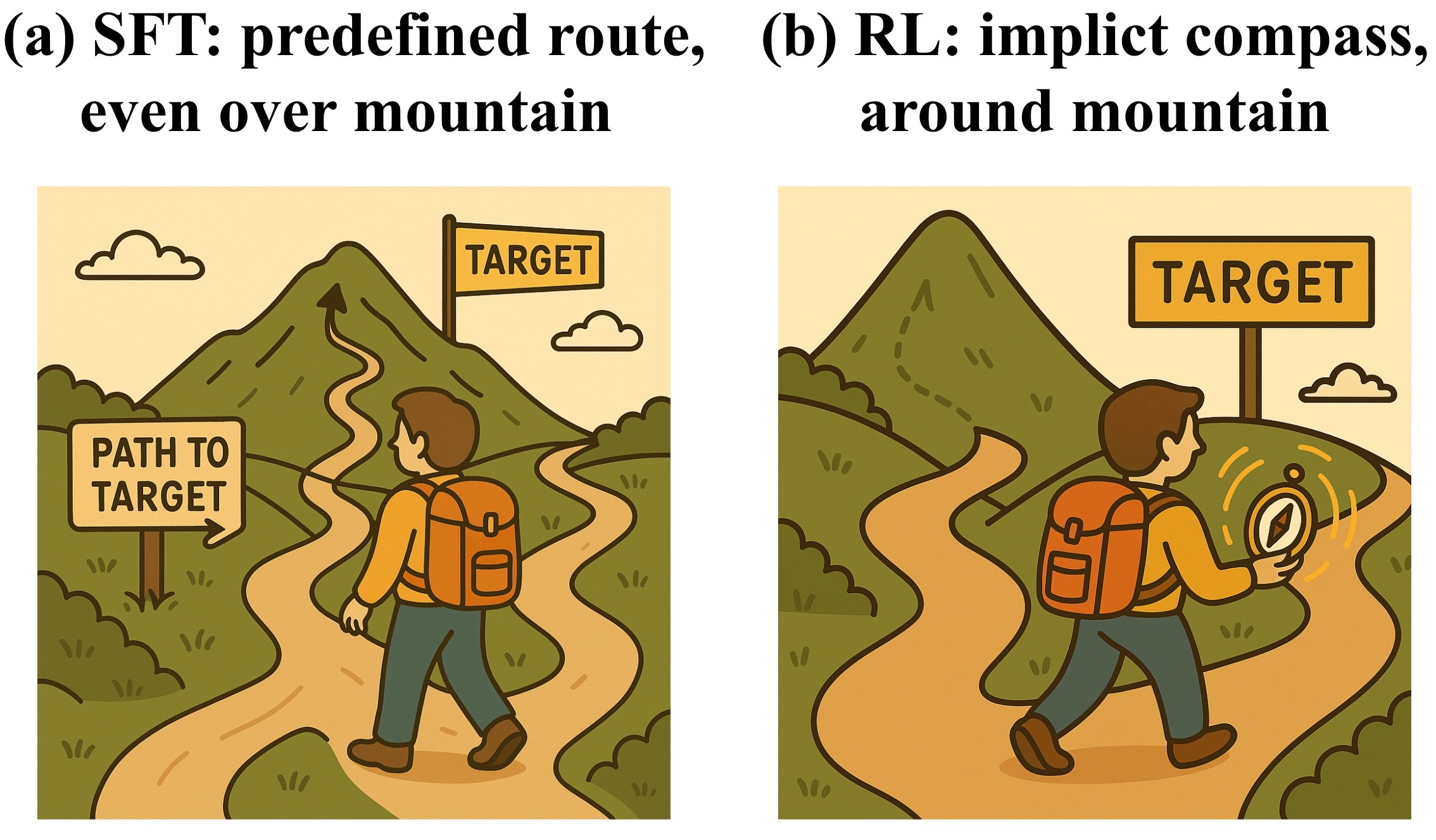

Hanqing Zhu, Zhenyu Zhang, Hanxian Huang, DiJia Su, Zechun Liu, Jiawei Zhao, Igor Fedorov, Hamed Pirsiavash, Zhizhou Sha, Jinwon Lee, David Z. Pan, Zhangyang Wang*†, Yuandong Tian*†, Kai Sheng Tai*†. arXiv, 2025; NeurIPS 2025 Workshop on Efficient Reasoning (Spotlight). [ Paper / Blog / X post / 量子位 / 新智元 ] First theory-driven RLVR study, with guidance for geometry-aligned RL optimization.

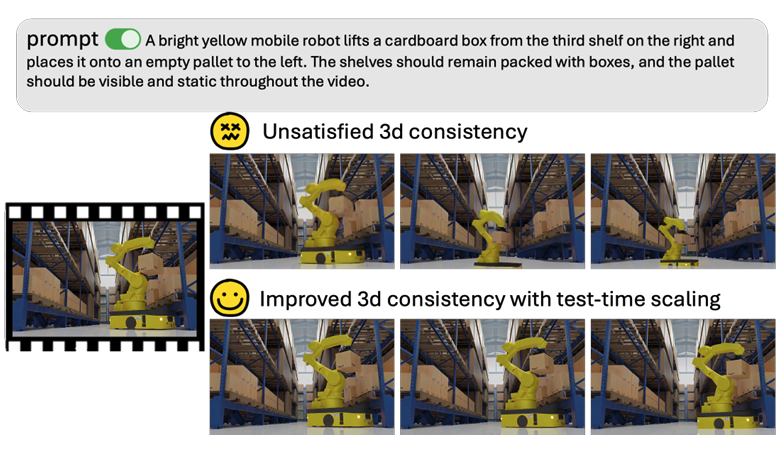

Wenyan Cong*, Hanqing Zhu*, Peihao Wang, Bangya Liu, Dejia Xu, Kevin Wang, David Z. Pan, Yan Wang, Zhiwen Fan, Zhangyang Wang. Conference on Language Modeling (COLM), 2025. [ Paper / Code ] First efficient test-time scaling method for world foundation models.

Hanqing Zhu*, Zhenyu Zhang*, Wenyan Cong, Xi Liu, Sem Park, Vikas Chandra, Bo Long, David Z. Pan, Zhangyang Wang, Jinwon Lee. Conference on Machine Learning and Systems (MLSys), 2025. [ 🏆 Outstanding Paper Honorable Mention / Paper / Code / Hacker News / HuggingFace / LLaMA-Factory / FluxML / axolotl / 机器之心 ] Theory-driven, scalable training with record-setting memory efficiency.

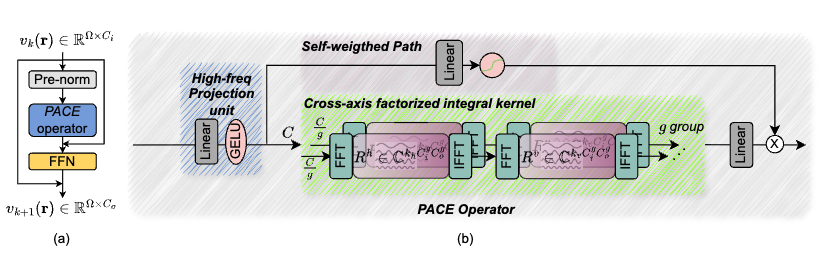

Hanqing Zhu, Wenyan Cong, Guojin Chen, Shupeng Ning, Ray Chen, Jiaqi Gu, David Z. Pan. Conference on Neural Information Processing Systems (NeurIPS), 2024. [ Paper / Code ] Theory-grounded, efficient, and fast operator model for scientific simulation.

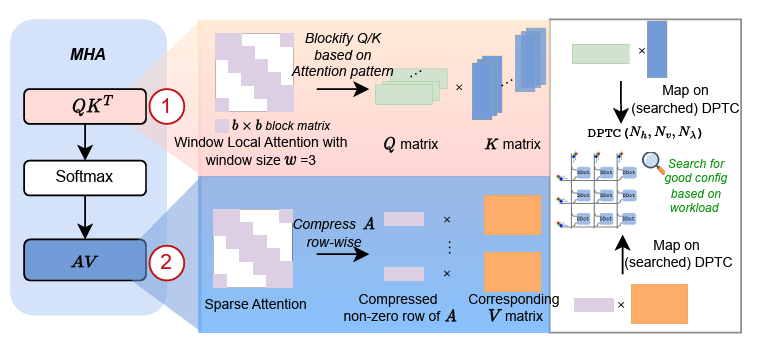

Hanqing Zhu, Jiaqi Gu, Hanrui Wang, Zixuan Jiang, Zhekai Zhang, Rongxin Tang, Chenghao Feng, Song Han, Ray T. Chen, David Z. Pan. IEEE International Symposium on High Performance Computer Architecture (HPCA), 2024 (acceptance rate: 18.3%). [ Paper / Code ] Hardware-software co-design; first photonic Transformer accelerator.

- Best Paper Award, CVPR AI for Content Creation Workshop, 2025

- Outstanding Paper Honorable Mention, MLSys, 2025

- ML and Systems Rising Stars (38 out of 150+), MLCommons, 2025

- DAC Ph.D. Forum, DAC 2025

- ICLR Notable Reviewer, ICLR 2025

- MLSys Student Travel Award, MLSys 2025

- Texas ECE Graduate Achievement Award, UT Austin 2024

- UT Graduate School Continuing Fellowship Nomination (1 of 2 nominees in ECE), UT Austin 2024

- 1st Place in IEEE/ACM MLCAD FPGA Macro-Placement Contest, MLCAD, 2023

- MLSys Student Travel Award, MLSys 2023

- Winner of Robert S. Hilbert Memorial Optical Design Competition, Synopsys, 2022

- DAC Young Fellow, 2021

- Shanghai Outstanding Graduate, 2020

- Hongyi Scholarship, 2019

- Outstanding Undergraduate Scholarship, 2019

- Samsung Scholarship, 2018

- Zhiyuan College Honors Scholarship, 2018

- 1st Prize, National Mathematical Contest in Modeling, Shanghai Division, 2018

- Academic Excellence Scholarship, 2017-2019

I have two lovely cats :)